AI Video Prompt Guide: Better Scenes, Faster Creation Flow

Explore tested scene scripts, copy proven templates, and turn rough ideas into polished clips across leading generators.

Curated references from official demos and creator case studies

AI Video Prompt Fundamentals for Better Video AI Results

Prompt-First Planning

Build a strong AI prompt before opening any prompt-to-video generator so your first draft is already on target.

Model-Aware Wording

Adapt wording for each video generator to keep prompt-to-video behavior stable across tools.

Fast Controlled Iteration

Change one variable per run and compare outputs to improve motion, framing, and visual consistency.
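One way to enforce the one-variable rule is to generate variants programmatically. The sketch below is a minimal illustration, not a feature of any generator: the `PromptSpec` fields, the `single_variable_variants` helper, and the sample wording are all hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical prompt structure; field names are illustrative."""
    subject: str
    action: str
    camera: str
    style: str

    def render(self) -> str:
        # Join the fields into one prompt string.
        return f"{self.subject} {self.action}. Camera: {self.camera}. Style: {self.style}."


def single_variable_variants(base, field_name, values):
    """Return copies of `base` that differ from it in exactly one field."""
    return [replace(base, **{field_name: value}) for value in values]


base = PromptSpec(
    subject="A red kite",
    action="rises over a windy beach",
    camera="slow upward tilt",
    style="warm late-afternoon light",
)

# Only the camera changes; subject, action, and style stay fixed,
# so any difference in output can be attributed to the camera wording.
variants = single_variable_variants(base, "camera", ["static wide shot", "handheld follow"])
for variant in variants:
    print(variant.render())
```

Because every variant shares the same base, a side-by-side comparison isolates the effect of the one field you changed.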

Why an AI Video Prompt Library Speeds Up Production

Use real prompt references to move from idea to final render faster, with less guesswork.

Reusable Prompt Patterns

Study AI video generator prompt patterns that consistently produce cleaner action and camera control.

Cross-Model Prompt Comparison

Test one base script as a Gemini video prompt, then compare outputs in Kling, Sora, and Vidu AI.

Faster Draft-to-Publish Flow

Reuse a template across text-to-video and image-to-video tests, then keep only high-performing variants.

How to Apply an AI Video Prompt in 4 Steps

Use this practical workflow:

Step 1: Define the Output Type

Choose text-to-video, image-to-video, or prompt-to-image ideation before drafting.

Step 2: Customize the Base Prompt

Edit subject, action, lens, pacing, and mood so the AI prompt generator's output fits your goal.

Step 3: Run Prompt-to-Video Variants

Test 2-3 versions in your prompt-to-video generator and compare the opening seconds side by side.

Step 4: Edit and Export

Finalize in a video editor, add text overlays where needed, and trim clips quickly in VLC.

AI Video Prompt Features for Practical SEO Workflows

Core capabilities that help creators, marketers, and teams ship faster.

Copy-Ready Prompt Blocks

Use each prompt block as a quick baseline, then tailor style and action in seconds.

Model and Context Tags

Tags record model type and use-case intent, making AI video generator tests easier to reproduce.

Intent-Based Search

Find prompts by use case, from Gemini AI video experiments to product ads and social clips.

Direct Prompt-to-Video Launch

Jump from inspiration to generation without rewriting, ideal for validation loops on free AI video generators.

AI Video Prompt FAQ for Real Search Queries

Practical answers for creators comparing prompt workflows, models, and free tools.

How can an AI video prompt improve prompt-to-video output?

Split your script into scene, motion, style, and camera direction. That structure turns a generic AI video idea into a measurable plan and usually improves consistency in any AI video generator workflow. To make each iteration objective, score every run on subject clarity, camera path, motion smoothness, lighting continuity, and ending coherence.
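The four-part structure and the scoring sheet can be sketched in a few lines. This is an illustrative outline under stated assumptions: the `build_prompt` and `score_run` helpers are hypothetical, and the 1-5 rating scale is an assumption rather than something the guide prescribes.

```python
# The five criteria named in the scoring sheet.
CRITERIA = (
    "subject_clarity",
    "camera_path",
    "motion_smoothness",
    "lighting_continuity",
    "ending_coherence",
)


def build_prompt(scene: str, motion: str, style: str, camera: str) -> str:
    """Assemble a prompt from the four labeled parts, in a fixed order."""
    parts = {"Scene": scene, "Motion": motion, "Style": style, "Camera": camera}
    return " ".join(f"{label}: {text}." for label, text in parts.items())


def score_run(scores: dict) -> float:
    """Average the five criteria; assumes each is rated 1-5 (an assumption)."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for name in CRITERIA:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(scores[c] for c in CRITERIA) / len(CRITERIA)


prompt = build_prompt(
    scene="a lighthouse at dusk",
    motion="waves roll in slowly",
    style="muted film grain",
    camera="slow push-in",
)
print(prompt)
print(score_run({
    "subject_clarity": 4,
    "camera_path": 3,
    "motion_smoothness": 5,
    "lighting_continuity": 4,
    "ending_coherence": 4,
}))
```

Requiring every criterion on every run keeps scores comparable between iterations, even when different reviewers fill in the sheet.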

What AI video prompt format works for Gemini video prompts?

For Gemini video prompts, keep verbs concrete and timing explicit. A short Gemini prompt with a clear action order often outperforms long prose; keep separate Gemini prompt variants for cinematic and product scenes. Use numbered clauses, tight duration ranges, and one style anchor so cross-model comparisons stay clear for teams.

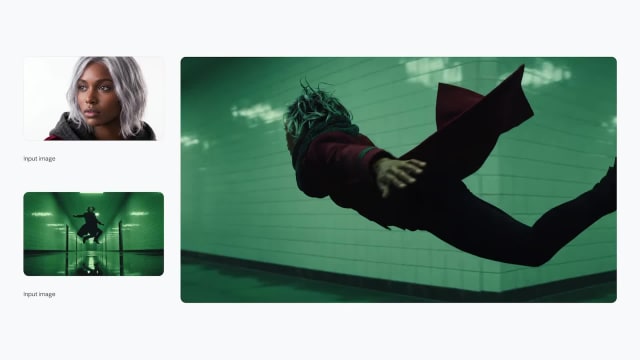

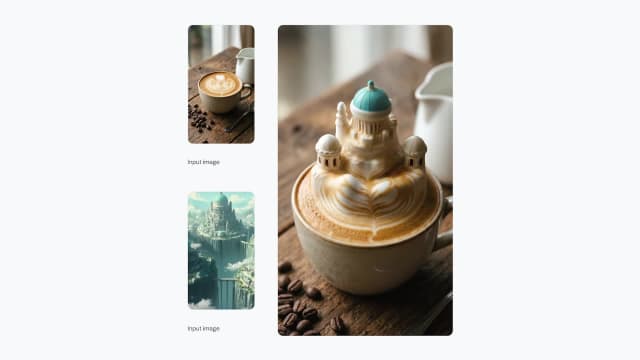

Can an AI video prompt start from image-to-prompt or image-to-video?

Yes. Use image-to-prompt when you already have a reference frame, then feed the result into an image-to-video or photo-to-video pipeline. If you only have ideas, begin with a text-to-image tool and convert that concept into motion instructions. Describe composition, depth, color temperature, lens intent, and movement direction in plain language.

Is an AI video prompt useful for free prompt-to-video tools?

Yes. A compact script helps on free prompt-to-video tools, where generation limits are tight, and the same approach carries over to free text-to-video generators. Run short drafts first, lock the winning structure, then scale duration to reduce wasted credits.

How do I adapt an AI video prompt for Sora, Kling 3.0, and Vidu AI?

Keep one master version, then create model-specific edits. Sora may reward cinematic framing, while Kling 3.0 and Vidu AI often respond better to explicit motion cues and shot timing. Maintain a compatibility table for lens terms, motion verbs, and style tags, then review it monthly to account for model updates.
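A compatibility table can be as simple as a per-model dictionary of phrase substitutions applied to the master prompt. The sketch below is hypothetical throughout: the `COMPATIBILITY` entries and the `adapt` helper are illustrative placeholders, not documented behavior of Sora, Kling, or Vidu AI.

```python
# Hypothetical compatibility table: generic term -> model-specific wording.
# The entries are placeholders to show the shape of the data, not verified
# preferences of any model. Replace them with phrasing from your own tests.
COMPATIBILITY = {
    "sora": {
        "cinematic look": "cinematic framing, shallow depth of field",
    },
    "kling": {
        "dolly in": "camera moves forward over 3 seconds",
    },
    "vidu": {
        "dolly in": "camera pushes toward the subject at a steady pace",
    },
}


def adapt(master: str, model: str) -> str:
    """Apply a model's substitutions to the master prompt.

    Models without an entry get the master prompt unchanged.
    """
    text = master
    for generic, specific in COMPATIBILITY.get(model, {}).items():
        text = text.replace(generic, specific)
    return text


master = "A barista pours latte art, dolly in, cinematic look"
for model in ("sora", "kling", "vidu"):
    print(f"{model}: {adapt(master, model)}")
```

Keeping the table as plain data means a review only needs to touch the dictionary, while the master prompt stays the single source of truth.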

Can an AI video prompt be drafted with ChatGPT or Claude?

Yes. A ChatGPT or Claude draft is useful as a first pass, but final quality comes from manual edits to camera logic, pacing, and action verbs tailored to your target model. Before each run, check the draft against an editing checklist covering action order, camera path, timing markers, and negative constraints.

Will an AI video prompt transfer to Dreamina AI, Luma Dream Machine, or Synthesia?

Usually yes, if you normalize the language. Replace style-heavy wording with observable actions, then retest in Dreamina AI, Luma Dream Machine, Synthesia, or similar platforms to find stable phrasing. Concrete nouns, clear verbs, and explicit timing markers usually transfer better between engines with different defaults.

How can an AI video prompt connect to video-to-prompt generator workflows?

Reverse-engineer successful clips using a video-to-prompt generator or manual notes. Combine transcription tools (YouTube video-to-text, video-to-audio, or audio-to-text) to capture timing and rebuild higher-quality prompts. Archive results with timestamp, seed, lens notes, and scene tags to accelerate reuse.
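The archive step could be sketched as one JSON record per run, stored one per line so the log stays easy to search. The record layout and the helper names (`make_record`, `to_jsonl`, `find_by_tag`) are assumptions made for illustration.

```python
import json
from datetime import datetime, timezone


def make_record(prompt, seed, lens_notes, scene_tags, when=None):
    """Build one archive entry; `when` defaults to the current UTC time."""
    when = when or datetime.now(timezone.utc)
    return {
        "timestamp": when.isoformat(),
        "prompt": prompt,
        "seed": seed,
        "lens_notes": lens_notes,
        "scene_tags": sorted(scene_tags),
    }


def to_jsonl(record) -> str:
    """Serialize a record as a single JSON line."""
    return json.dumps(record, ensure_ascii=False)


def find_by_tag(lines, tag):
    """Return the archived records whose scene tags include `tag`."""
    return [rec for rec in map(json.loads, lines) if tag in rec["scene_tags"]]


archive = [
    to_jsonl(make_record("Kite rises over a beach", 42, "35mm, low angle", ["beach", "kite"])),
    to_jsonl(make_record("Barista pours latte art", 7, "macro, shallow focus", ["cafe"])),
]
for record in find_by_tag(archive, "beach"):
    print(record["seed"], record["prompt"])
```

In practice each line would be appended to a file or shared store; the in-memory list here keeps the example free of side effects.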

Can an AI video prompt strategy support both free and paid text-to-video plans?

Yes. Keep prompt length efficient, test in batches, and compare first-frame clarity. This works across text-to-video generator stacks, free AI video generators, and enterprise-grade pipelines. For paid tiers, benchmark cost per accepted render and turnaround time against baseline quality scores.
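Cost per accepted render is total spend divided by the number of renders that pass your quality bar. The sketch below assumes a hypothetical `Render` record and an acceptance threshold on a review score; the prices and scores are made-up sample values.

```python
from dataclasses import dataclass


@dataclass
class Render:
    cost: float   # spend for this render, in your billing currency
    score: float  # review score from your own rubric (scale is an assumption)


def cost_per_accepted_render(renders, threshold):
    """Divide total spend (including rejected renders) by the accepted count.

    Returns None when nothing meets the threshold, since the ratio is undefined.
    """
    accepted = [r for r in renders if r.score >= threshold]
    if not accepted:
        return None
    return sum(r.cost for r in renders) / len(accepted)


# Sample batch with invented numbers: four renders, two pass a 4.0 threshold.
batch = [Render(0.50, 4.2), Render(0.50, 2.8), Render(0.50, 3.9), Render(0.50, 4.6)]
print(cost_per_accepted_render(batch, threshold=4.0))
```

Counting rejected renders in the numerator is the point of the metric: a cheaper tier that needs more retries can cost more per usable clip.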

What post-edit steps should follow AI video prompt generation?

After rendering, refine pacing in a video editor and add text overlays where needed. For quick cleanup, trimming in VLC is still useful; for deeper polish, compare the leading free video editors. Apply a finishing checklist for subtitle readability, audio sync, and brand consistency before publishing. Add file naming standards, version control rules, and approval gates so delivery stays predictable.

How should an AI video prompt handle noisy keyword trends?

Ignore irrelevant searches such as "walmart near me" or "temp mail" when building your content map. If queries like "fb video download" appear, include them only when your page truly solves that user intent. Cluster terms by creation, editing, transcription, and download goals, then remove mismatched clusters early. Review bounce rate, dwell time, and assisted conversions before scaling. Re-check query logs weekly, deprecate weak intent clusters quickly, and document removals so audits stay consistent.

Where can I research references after building an AI video prompt?

Combine community and official sources: Civitai for experiments, Adobe Firefly and Midjourney for style direction, and Freepik, Pixabay, or Pexels for licensed stock assets. For internal links, point to the workflow hub, editing guide, and model comparison index; for external sources, cite model release notes from official vendor pages. Build a source ledger with author, license, publish date, and usage limits for every asset so compliance checks stay simple during campaign launches.

Should an AI video prompt strategy chase Seedance 2.0 or Comatoze trends?

Treat "seedance", "seedance 2.0", "prompt seen", "comatose", and "comatoze" as signals to validate, not automatic targets. Keep only terms that match real user intent and measurable conversion behavior, and check intent, click quality, and conversion impact before adding any term to your core plan. Compare seasonal demand, geo differences, and freshness windows so short spikes are not mistaken for durable opportunities.

Which tools should anchor an AI video prompt stack in 2026?

Start with stable options such as Google AI and Gemini Enterprise, then test niche tools like PixVerse, Fliki, Grok, Luma AI, or Novi AI where available. Use NotebookLM or NoteGPT to summarize test logs, follow AI news for model updates, and run a monthly benchmark cadence.

Keep a shared benchmark sheet with fixed scene briefs, duration targets, motion goals, and acceptance thresholds. Rotate reviewers to reduce personal bias, archive failed attempts with root-cause notes, and document why each winner was selected. Pair this with weekly standups where design, marketing, and growth teams align on intent clusters, landing-page mapping, and conversion hypotheses.

Create a reusable operations playbook for your content team. Define naming conventions for each test case, including objective, audience segment, tone, ratio, duration, camera path, and call-to-action intent. Store all runs in a shared database with tags for industry, funnel stage, and creative direction. Add acceptance gates for clarity, continuity, brand fit, and legal safety.

Run weekly retrospectives to review wins, misses, and outliers, then convert lessons into updated templates, checklists, and review rubrics. Set quarterly goals for output quality, turnaround time, and cost efficiency, and track progress in a transparent dashboard. When model behavior shifts, trigger a small recalibration sprint instead of rewriting everything. This process keeps experimentation structured, helps stakeholders trust results, and gives the SEO team stable landing-page narratives aligned with measurable demand.

Create Your Next Clip with an AI Video Prompt

Pick a template, customize it, and launch your next generation run.